University teachers’ perceptions regarding the quality of tasks assessing learning outcomes

DOI:

https://doi.org/10.30827/relieve.v29i1.27404

Keywords:

Assessment, Learning, Higher Education

Abstract

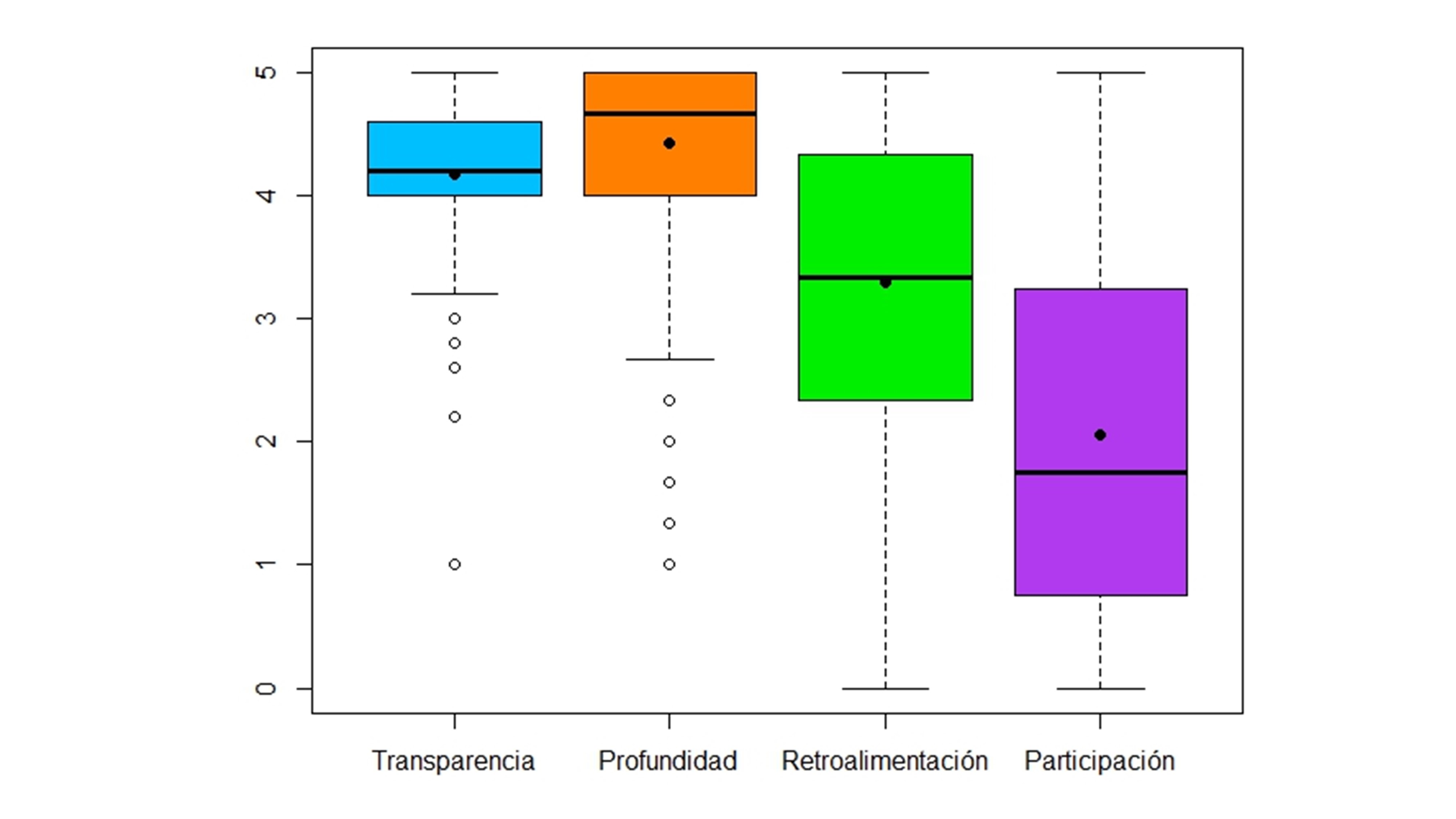

Assessing whether learning outcomes have been achieved requires teaching, assessment and learning to be constructively aligned, which highlights the importance of designing assessment tasks that meet the quality conditions needed to strengthen student learning. This study analysed university lecturers’ perceptions of the design characteristics of the assessment tasks they use in their evaluative practice. The study followed a mixed methodology (exploratory sequential design) using the RAPEVA questionnaire (Self-report from teaching staff on their practice in learning outcome assessment). The questionnaire collected the opinions of 416 teachers working at six public universities in various Spanish autonomous regions. Transparency, through information provided to students, and the depth of the tasks are two aspects often mentioned by the teachers. In contrast, feedback and student participation in assessment processes are aspects that teachers consider less important. Differences in perception were detected depending on the university, the field of knowledge, and how secure and satisfied the teachers feel with the assessment system. Based on these results, future lines of research are suggested to foster a greater understanding of evaluative practices in higher education.

License

Copyright (c) 2023 RELIEVE – Electronic Journal of Educational Research and Evaluation

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

The authors grant RELIEVE non-exclusive rights to exploit the published works and consent to their distribution under the Creative Commons Attribution-NonCommercial 4.0 International License (CC BY-NC 4.0), which allows third parties to use the published material provided that the authorship of the work and the source of publication are credited and the use is for non-commercial purposes.

Authors may enter into additional, independent contractual agreements for the non-exclusive distribution of the version of the work published in this journal (for example, by including it in an institutional repository or publishing it in a book), as long as it is clearly stated that the original source of publication is this journal.

Authors are encouraged to disseminate their work online after it has been published (for example, in institutional online archives or on their own websites), which can generate interesting exchanges and increase citations of the work.

Submitting a paper to RELIEVE implies acceptance of these conditions.